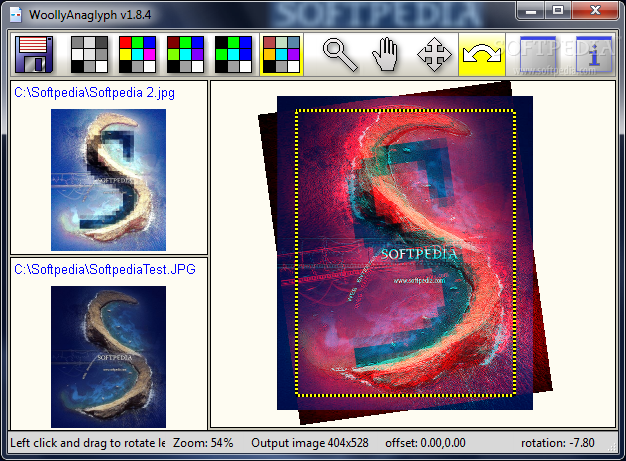

Objects, the mismatch for a nearby object tells how close this object is. Whereas the left-eye and right-eye view may coincide perfectly for far-away Information is then apparent from something called "disparity", the small visualĭisplacements of the red and green/cyan color components for nearby objects. Slightly different views into one perceived three-dimensional view. The human brain subsequently combines the Transmits only the left-eye red image while the green filter in front of the rightĮye transmits only the right-eye image. The red filter in front of the left eye blocks the green or cyan component and See the image in 3D by looking at the anaglyph through red-green glasses: Next, the two differentlyĬolored views are overlaid on top of each other. The right-eye view is taken through a green or cyan filter. For instance, the left-eye view may be taken through a red filter while Is an image that is created by combining two viewpoints through different colorįilters. The vOICe binocular processing uses so-called anaglyphic video input. Moreover, by now knowing distances and apparent (angular) sizes in theĬamera view, the user can deduce the actual sizes of nearby objects without firstģD anaglyph for sighted viewing with red/green glasses Obtained from two different simultaneous viewpoints, the distances to nearby By comparing the slight differences in images Powerful and reliable method is to make use of binocular vision, also called While moving around (this is what people blind in one eye do as well), but a more Is to derive size and distance information from video sequences of a single camera The required recognition of objects remains a daunting task. In only one eye do all the time, without apparent effort, but for machine vision, World to derive distances from recognized objects. 
Out to be extremely hard, because the distance information in a static camera view isĮssentially ambigious and requires much a priori knowledge about the physical Warning signal when there is a collision threat. In designing an electronic travel aid (ETA) for the blind, a key advantage ofĪ sonar device over a camera used to be that it is relativelyĮasy with sonar to measure distances to nearby obstacles, allowing one to generate a Vision substitution and synthetic vision features. The vOICe as an ETA (Electronic Travel Aid) for the blind in addition to its general This possible, and will thus further enhance the applicability and versatility of Perception in order to detect nearby objects and obstacles.

Setup, but the mobility component can benefit from better depth and distance Users, the orientation component is supported well by the standard single camera

In orientation and mobility applications for blind This page discusses how The vOICe technology supports binocular vision with suitable Setup Wizard (formerly named Novo - Minoru Webcam Setup Wizard) program to make the two camera views Note that you must use the Minoru 3D Webcam (formerly named Novo - Minoru) in the menu Devices.

ANAGLYPH WORKSHOP FOR WINDOWS DRIVER

Using Microsoft AMCAP, the anaglyph driver shows up as "Minoru 3D Webcam" However, it does work with Microsoft Directshow, and therefore the same approachĪs described below for HeavyMath Cam 3D can be applied, using active window sonification.

ANAGLYPH WORKSHOP FOR WINDOWS WINDOWS

Unfortunately, the webcam cannot interfaceĭirectly with the binocular vision features of The vOICe for Windows because it still lacksĪ Video for Windows compliant video driver, while its Novo anaglyph driver also does not show upĪs a WDM driver. (Minoru3D) from Novo generates anaglyph video, and anaglyph video input is exactly what The vOICeĮxpects for its stereoscopic vision and depth mapping. There is an affordable 3D webcam on the market: the Augmented Reality: Stereoscopic Vision for the Blind Stereoscopic Vision for the Blind Binocular vision support for The vOICe auditory display
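To make the anaglyph and disparity ideas concrete, here is a minimal Python sketch. It is an illustration only, not The vOICe's actual processing. It overlays a left-eye view (red channel) and a right-eye view (green channel) into an anaglyph, estimates the red/green displacement along one image row by brute-force matching, and converts that disparity to a distance with the standard pinhole stereo relation Z = f·B/d. All function names are hypothetical, and the focal length (800 pixels) and camera baseline (0.06 m) are assumed values chosen for the example, not specifications of any particular webcam.

```python
import math

def make_anaglyph(left, right):
    """Overlay two grayscale views: left view -> red, right view -> green."""
    return [[(l, r, 0) for l, r in zip(lrow, rrow)]
            for lrow, rrow in zip(left, right)]

def row_disparity(anaglyph_row, max_shift):
    """Return the shift (pixels) that best aligns the red and green channels.

    Assumes a nearby object appears further to the right in the left-eye
    (red) view than in the right-eye (green) view.
    """
    red = [p[0] for p in anaglyph_row]
    green = [p[1] for p in anaglyph_row]
    best_shift, best_cost = 0, float("inf")
    for shift in range(max_shift + 1):
        # Mean absolute difference over the overlapping pixels.
        diffs = [abs(red[x] - green[x - shift]) for x in range(shift, len(red))]
        cost = sum(diffs) / len(diffs)
        if cost < best_cost:
            best_shift, best_cost = shift, cost
    return best_shift

def distance_from_disparity(disparity_px, focal_px, baseline_m):
    """Pinhole stereo model: Z = f * B / d. Zero disparity means 'far away'."""
    if disparity_px == 0:
        return math.inf
    return focal_px * baseline_m / disparity_px

def actual_size(distance_m, angular_size_rad):
    """Physical size of an object from its distance and apparent angular size."""
    return 2.0 * distance_m * math.tan(angular_size_rad / 2.0)

if __name__ == "__main__":
    # One-row toy views: a bright object shifted by two pixels between the eyes.
    left = [[0, 0, 0, 0, 9, 9, 0, 0]]
    right = [[0, 0, 9, 9, 0, 0, 0, 0]]
    anaglyph = make_anaglyph(left, right)
    d = row_disparity(anaglyph[0], max_shift=4)
    z = distance_from_disparity(d, focal_px=800.0, baseline_m=0.06)  # assumed values
    print("disparity (px):", d)
    print("distance (m):", z)
    print("size (m) at 0.1 rad:", actual_size(z, 0.1))
```

A real system would match small image patches rather than whole rows and would need calibrated camera parameters. The sketch only shows why a larger red/green mismatch corresponds to a closer object, and how a known distance plus an apparent angular size yields an actual size.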